Mastering Projects

A mastering project helps organizations find records that refer to the same entity within and across input datasets.

Purpose and Overview

In a mastering project, you "deduplicate" the unified dataset by identifying characteristics that indicate record similarity or difference, then review and validate pairs and clusters of records. This effort is often referred to as data mastering, entity resolution, or record linkage.

Data mastering is one of the major workflows you can use in:

- Data unification projects, such as Customer Data Integration (CDI) and Master Data Management (MDM).

- Matching projects, such as entity detection and enrichment.

High-Level Stages in a Mastering Workflow

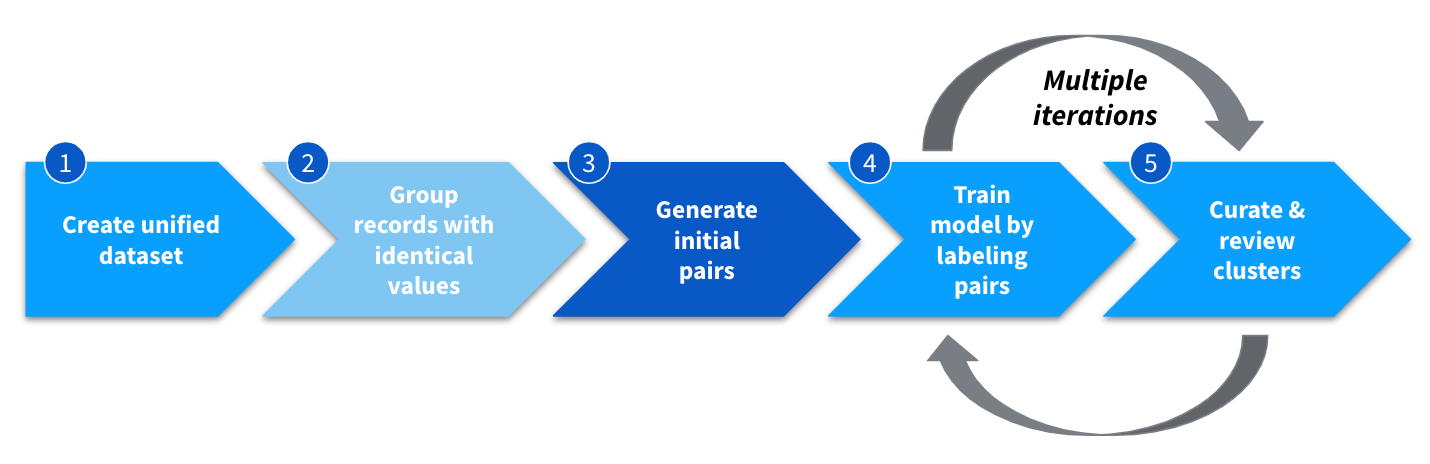

In a mastering project, you create a unified dataset, optionally identify obviously-matching records and group them, and then generate an initial set of pairs that you identify as matching or non-matching. You then iteratively run the machine learning model to improve the accuracy of the resulting pairs and clusters, and then refine the clusters.

The process of data mastering. Stage 2 is a lighter shade to indicate that it is optional. Stage 3 is a darker shade to indicate that Tamr Core completes this stage.

This topic gives a high-level introduction to these stages:

- Stage 1: Begin creating a unified dataset

- Stage 2 (Optional): Group obvious duplicates

- Stage 3: Generate initial pairs to identify matches

- Stage 4: Train the matching model by labeling pairs

- Stage 5: Curate and review record clusters

Note: If enabled, you can also include data enrichment as part of the mastering workflow. See Managing Enrichment Projects.

Stage 1: Begin Creating a Unified Dataset

The initial stage of a mastering project is similar to that of a schema mapping project (see Schema Mapping Projects): an admin or author creates the project and uploads one or more input datasets. As in a schema mapping project, curators then map attributes in the input datasets to attributes in the unified schema.

Curators then complete additional configuration in the unified schema that is specific to mastering projects:

- Identify the single logical entity, such as people, customers, or products, for mastering.

- Indicate which of the unified attributes contribute to finding similarities and differences among the records.

- Specify the tokenizers and similarity functions for machine learning models to use when comparing data values and finding similarities and differences.

- Optionally set up transformations for the data in the unified dataset.

See Creating the Unified Dataset for Mastering.
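To make the idea of a tokenizer paired with a similarity function concrete, here is a minimal sketch in plain Python. It is not Tamr Core code: the function names `word_tokens` and `jaccard` are hypothetical, and the choice of word tokens with Jaccard similarity is an assumption made for illustration only.

```python
import re


def word_tokens(value: str) -> set[str]:
    """Hypothetical tokenizer: lowercase the value and split it into alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", value.lower()))


def jaccard(a: str, b: str) -> float:
    """Hypothetical similarity function: shared tokens divided by all distinct tokens."""
    tokens_a, tokens_b = word_tokens(a), word_tokens(b)
    if not tokens_a and not tokens_b:
        return 0.0  # two empty values give no evidence of similarity
    return len(tokens_a & tokens_b) / len(tokens_a | tokens_b)


# "Acme Corp Inc" and "ACME corp" share 2 of 3 distinct tokens.
score = jaccard("Acme Corp Inc", "ACME corp")
```

A different tokenizer (for example, character n-grams) would change which values count as similar, which is why this choice is made per attribute.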

Stage 2 (Optional): Group Obvious Duplicates

For datasets that are known to contain duplicates, and that include attributes that can reliably identify unique entities, curators can enable the record grouping feature. Tamr Core organizes records that have identical values for all of the selected "grouping key" attributes into groups. For each of the other attributes, curators choose an aggregation function to apply to the set of values found within a group.

Applying this stage to one or more of the input datasets can make later stages in the mastering workflow more efficient. See Grouping Obvious Duplicates.
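The grouping idea can be sketched in a few lines of Python. This is an illustration under stated assumptions, not how Tamr Core implements record grouping: the function `group_records`, the attribute names, and the aggregation functions are all hypothetical.

```python
from collections import defaultdict


def group_records(records, key_attrs, aggregators):
    """Group records with identical grouping-key values, then aggregate the other attributes."""
    groups = defaultdict(list)
    for rec in records:
        key = tuple(rec[attr] for attr in key_attrs)
        groups[key].append(rec)

    grouped = []
    for key, members in groups.items():
        out = dict(zip(key_attrs, key))
        for attr, aggregate in aggregators.items():
            out[attr] = aggregate([m[attr] for m in members])
        grouped.append(out)
    return grouped


# Hypothetical sample data: tax_id is assumed to identify an entity reliably.
records = [
    {"tax_id": "11-111", "name": "Acme Corp", "revenue": 10},
    {"tax_id": "11-111", "name": "Acme Corporation", "revenue": 12},
    {"tax_id": "22-222", "name": "Globex", "revenue": 7},
]
grouped = group_records(
    records,
    key_attrs=["tax_id"],
    aggregators={"name": lambda values: max(values, key=len), "revenue": max},
)
```

Here three input records collapse into two groups, so later stages have fewer records to compare.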

Stage 3: Generate Initial Pairs to Identify Matches

Curators use their knowledge of the data to create a "blocking model." A blocking model uses one or more of the unified attributes to filter out pairs of records that obviously do not match each other. For example, a blocking model for data about individuals might require that last_name be at least 90% similar and phone be at least 75% similar for two records to be a potential match. See Defining the Blocking Model.
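The example rule above can be expressed as a small Python sketch. It is only an illustration: `similarity` uses the standard library's `difflib.SequenceMatcher` as a stand-in for whatever similarity function the project is configured with, and `passes_blocking` and the sample records are hypothetical.

```python
from difflib import SequenceMatcher
from itertools import combinations


def similarity(a: str, b: str) -> float:
    """Stand-in similarity in the range 0.0 to 1.0 (an assumption, not Tamr Core's function)."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def passes_blocking(r1: dict, r2: dict) -> bool:
    """Keep a pair only if last_name is >= 90% similar and phone is >= 75% similar."""
    return (
        similarity(r1["last_name"], r2["last_name"]) >= 0.90
        and similarity(r1["phone"], r2["phone"]) >= 0.75
    )


records = [
    {"id": 1, "last_name": "Johnson", "phone": "555-0100"},
    {"id": 2, "last_name": "Johnson", "phone": "555-0100"},
    {"id": 3, "last_name": "Nguyen", "phone": "555-0199"},
]

# Comparing every pair is quadratic; it is done here only to keep the sketch short.
candidate_pairs = [
    (a["id"], b["id"]) for a, b in combinations(records, 2) if passes_blocking(a, b)
]
```

Only the pair of records 1 and 2 survives the filter, so it is the only pair that would be passed on for labeling.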

From the blocking model, Tamr Core generates an initial set of pairs that pass the filter. A verifier or curator evaluates a random sample of the pairs and labels them as being either a Match or No Match. This labeling effort provides additional input for the machine learning model to use in finding similarities between records. See Training Initial Pairs.

Stage 4: Train the Matching Model by Labeling Pairs

After a curator provides the initial training sample and updates the model with this feedback, the machine learning model generates additional sets of pairs that include a match or no match suggestion and a level of confidence for that suggestion. Curators or verifiers assign these pairs to reviewers so that they can contribute their expertise in identifying correct and incorrect matching pairs and correct and incorrect non-matching pairs. Curators and verifiers then validate the work of the reviewers and accept or reject their input. See Curating Pairs (curators) and Viewing and Verifying Pairs (verifiers).

The first time the machine learning model runs, applying the initial training sample and generating suggestions for pairs, it also generates record clusters. Tamr Core automatically assigns a baseline cluster ID to each cluster. Stage 5 can begin while stages 3 and 4 are ongoing.

Stage 5: Curate and Review Record Clusters

Because more than two records can refer to the same real-world entity, the next stage in a mastering project uses all of the input about pairs and record groups to cluster all matching records together. See Working with Clusters.

The clustering process:

- Identifies records that refer to the same real-world entity.

- Puts all of those records, and only those records, into a cluster.
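One simple way to picture how pairs become clusters is to treat every matching pair as a link and collect the linked records. The Python sketch below does this with a union-find structure. Treating matches as strictly transitive is an assumption made for illustration; the function `cluster_records` is hypothetical and does not describe Tamr Core's clustering algorithm.

```python
def cluster_records(record_ids, matching_pairs):
    """Put records connected by matching pairs, directly or indirectly, into one cluster."""
    parent = {rid: rid for rid in record_ids}

    def find(x):
        # Follow parent links to the cluster root, shortening the path along the way.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    for a, b in matching_pairs:
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    clusters = {}
    for rid in record_ids:
        clusters.setdefault(find(rid), []).append(rid)
    return list(clusters.values())


# Records 1-2 and 2-3 match, so 1, 2, and 3 land in the same cluster.
clusters = cluster_records([1, 2, 3, 4, 5], [(1, 2), (2, 3), (4, 5)])
```

A single wrong matching pair can chain two unrelated clusters together in this simplified model, which is one reason cluster review matters.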

Curators and verifiers review and validate clusters, merging them together if they contain records for the same entity, or splitting them if they contain records for different entities. The result is clusters of records that each correspond to a different unique entity. See Verifying Clusters.

Curators periodically "publish" clusters to reflect changes to the blocking model, pair labels, or the clusters themselves. This process updates the cluster IDs and allows for comparison to the initial, baseline clusters and subsequent published snapshots. Curators can use cluster metrics for precision and recall to evaluate whether the machine learning model is improving as a result of cluster curation. See Precision and Recall Metrics.
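As a rough illustration of what precision and recall mean for clusters, the sketch below compares the record pairs implied by a set of predicted clusters with the pairs implied by verified clusters. This pairwise definition is an assumption chosen for simplicity; the metrics that Tamr Core reports are defined in Precision and Recall Metrics, and the function names here are hypothetical.

```python
from itertools import combinations


def cluster_pairs(clusters):
    """Return every unordered pair of records that share a cluster."""
    pairs = set()
    for members in clusters:
        pairs.update(frozenset(pair) for pair in combinations(members, 2))
    return pairs


def pairwise_precision_recall(predicted, verified):
    """Precision: share of predicted pairs that are correct. Recall: share of true pairs found."""
    predicted_pairs = cluster_pairs(predicted)
    true_pairs = cluster_pairs(verified)
    correct = len(predicted_pairs & true_pairs)
    precision = correct / len(predicted_pairs) if predicted_pairs else 1.0
    recall = correct / len(true_pairs) if true_pairs else 1.0
    return precision, recall


predicted = [[1, 2, 3], [4, 5]]    # the model put record 3 with records 1 and 2
verified = [[1, 2], [3], [4, 5]]   # a reviewer split record 3 into its own cluster
precision, recall = pairwise_precision_recall(predicted, verified)
```

In this example the over-merged cluster lowers precision to 0.5 while recall stays at 1.0, because every true pair was still found.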

When you are confident that your mastering project groups records into clusters accurately, you can:

- Create a single record for each unique entity from the best available data in records with the same cluster ID. See Golden Records Projects.

- Use the cluster information as a key in other systems.
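The first option above, building one record per entity, might look like the following Python sketch. It assumes each record carries a `cluster_id` field and picks the most common non-empty value for each attribute. Both the field name and the "most common value" rule are assumptions for illustration, not the rules that a golden records project applies.

```python
from collections import Counter, defaultdict


def golden_records(records, attrs):
    """Build one record per cluster from the most common non-empty value of each attribute."""
    by_cluster = defaultdict(list)
    for rec in records:
        by_cluster[rec["cluster_id"]].append(rec)

    golden = {}
    for cluster_id, members in by_cluster.items():
        golden[cluster_id] = {}
        for attr in attrs:
            values = [m[attr] for m in members if m.get(attr)]
            # most_common(1) returns the value seen most often; None if no value exists.
            golden[cluster_id][attr] = (
                Counter(values).most_common(1)[0][0] if values else None
            )
    return golden


# Hypothetical clustered records.
records = [
    {"cluster_id": "c1", "name": "Acme Corp", "phone": None},
    {"cluster_id": "c1", "name": "Acme Corp", "phone": "555-0100"},
    {"cluster_id": "c1", "name": "ACME", "phone": "555-0100"},
    {"cluster_id": "c2", "name": "Globex", "phone": None},
]
golden = golden_records(records, ["name", "phone"])
```

The resulting dictionary is keyed by cluster ID, which mirrors the second option: the same ID can serve as a key when joining to other systems.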

For more information, see User Roles and Tamr Core Documentation.
