Working with Clusters

Curators organize records into clusters that contain all of the records that refer to the same real-world entity.

When you apply feedback and update pairs, Tamr Core also generates the first iteration of record clusters. A cluster can contain one or more records, all of which should represent the same distinct entity. In a given data mastering project, cluster size ranges from one record, known as a singleton cluster, to thousands or tens of thousands of records in a cluster.

Note: At initial cluster generation, Tamr Core assigns all records that are in a record group to the same cluster. You can override these assignments and move records into different clusters; however, this might indicate that a review of your grouping keys is needed.

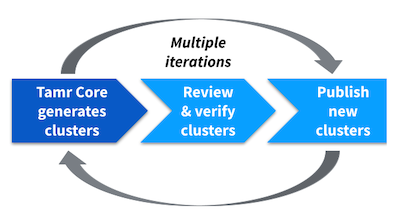

The iterative review and curation of important clusters allows the model to accurately and automatically cluster records into distinct entities. Step 1 is a darker shade of blue to indicate that Tamr Core completes this step.

To achieve one record cluster for each entity, containing all records for that entity and only records for that entity, you and your experts review a small number of important clusters and take the following actions to improve the model:

- Merge any clusters that contain records for the same entity.

- Move records that are in a cluster for a different entity into the existing cluster for the entity they represent.

- Separate records into a new cluster for an entity that does not already have a cluster.

- Verify records as correctly belonging to a cluster. When you verify each record's membership in a cluster you can choose whether the model can use that verified membership to make suggestions about future cluster members.
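The four actions above can be pictured as operations on a mapping from cluster to records. The following is a minimal illustrative sketch, not Tamr Core code: the `clusters` dictionary, the `verified` set, and every function name are hypothetical, and in the product you perform these actions on the Clusters page.

```python
# Hypothetical in-memory model: cluster ID -> set of record IDs.
clusters = {
    "c1": {"r1", "r2"},
    "c2": {"r3"},
    "c3": {"r4", "r5"},
}
# Hypothetical store of (record ID, cluster ID) memberships confirmed by a reviewer.
verified = set()


def merge(clusters, target, source):
    """Merge: fold every record of `source` into `target` and drop `source`."""
    clusters[target] |= clusters.pop(source)


def move(clusters, record, source, target):
    """Move: take one record out of `source` and put it in an existing `target`."""
    clusters[source].remove(record)
    clusters[target].add(record)


def separate(clusters, records, source, new_id):
    """Separate: split `records` out of `source` into a brand-new cluster."""
    records = set(records)
    clusters[source] -= records
    clusters[new_id] = records


def verify(verified, clusters, record, cluster_id):
    """Verify: confirm that `record` correctly belongs to `cluster_id`."""
    if record not in clusters[cluster_id]:
        raise ValueError(f"{record} is not in {cluster_id}")
    verified.add((record, cluster_id))


merge(clusters, "c1", "c2")             # c1 now holds r1, r2, r3
move(clusters, "r4", "c3", "c1")        # r4 joins c1; c3 keeps r5
separate(clusters, ["r1"], "c1", "c4")  # r1 gets its own new cluster c4
verify(verified, clusters, "r5", "c3")  # r5 is confirmed as a member of c3
```

After these calls, `c1` contains `r2`, `r3`, and `r4`; `c3` contains `r5`; and `c4` is a singleton cluster containing `r1`.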

After you review high-impact clusters, you can generate precision and recall metrics to help you track model accuracy over time.
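This page does not state how Tamr Core computes these metrics. As a rough illustration only, the sketch below uses a common pairwise definition: precision is the share of record pairs placed in the same predicted cluster that truly belong together, and recall is the share of truly matching pairs that the predicted clusters capture. The function names and sample data are hypothetical.

```python
from itertools import combinations


def pairs(clustering):
    """Return the set of unordered record pairs that share a cluster."""
    result = set()
    for members in clustering.values():
        result.update(frozenset(p) for p in combinations(sorted(members), 2))
    return result


def pairwise_precision_recall(predicted, truth):
    """Compare predicted clusters with reviewer-confirmed clusters, pair by pair."""
    pred, true = pairs(predicted), pairs(truth)
    true_positives = len(pred & true)
    # With no pairs to judge, treat the metric as perfect rather than divide by zero.
    precision = true_positives / len(pred) if pred else 1.0
    recall = true_positives / len(true) if true else 1.0
    return precision, recall


# Predicted clusters put r3 with r1 and r2; the reviewers say r3 belongs with r4.
predicted = {"c1": {"r1", "r2", "r3"}, "c2": {"r4"}}
truth = {"e1": {"r1", "r2"}, "e2": {"r3", "r4"}}
precision, recall = pairwise_precision_recall(predicted, truth)
```

In this example, the predicted clusters contain three pairs, of which one is correct, so precision is 1/3. The confirmed clusters contain two pairs, of which one is found, so recall is 1/2.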

Tip: The first time that you initiate an Apply feedback and update results or Update results only job in a mastering project, Tamr Core “publishes” the initial set of clusters by assigning persistent IDs. As you work with clusters, you choose when to manually republish by running a Review and publish clusters job. This job assigns persistent IDs to any new clusters and deletes any empty clusters. Each time you republish, Tamr Core saves a snapshot of the clusters and recomputes recall and precision metrics.
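To make the republish behavior concrete, here is a small sketch of the bookkeeping the tip describes: empty clusters are deleted, clusters without a persistent ID receive one, existing IDs are left unchanged, and a snapshot is saved. All names are hypothetical, the use of UUIDs as persistent IDs is an assumption, and the metric recomputation is omitted.

```python
import copy
import uuid


def republish(clusters, persistent_ids, snapshots):
    """Hypothetical republish step.

    clusters: working cluster key -> set of record IDs
    persistent_ids: working cluster key -> persistent ID assigned earlier
    snapshots: list that receives one saved copy of the clusters per publish
    """
    # Delete empty clusters, along with any persistent ID they held (assumption).
    for key in [k for k, members in clusters.items() if not members]:
        del clusters[key]
        persistent_ids.pop(key, None)

    # Assign a persistent ID only to clusters that do not have one yet.
    for key in clusters:
        if key not in persistent_ids:
            persistent_ids[key] = str(uuid.uuid4())

    # Save a snapshot of the published state.
    snapshots.append(copy.deepcopy(clusters))
    return persistent_ids


clusters = {"a": {"r1"}, "b": set(), "c": {"r2", "r3"}}
persistent_ids = {"a": "id-a"}  # "a" was published earlier; "c" is new
snapshots = []
republish(clusters, persistent_ids, snapshots)
```

After the call, the empty cluster `b` is gone, `a` keeps `id-a`, `c` has a newly generated ID, and `snapshots` holds one copy of the published clusters.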


Learned Pairs

When you enable this feature in a project, Tamr Core can learn how to create new pairs and pair label suggestions based on your cluster verifications. The changes you make to your clusters can provide additional feedback to the model, resulting in new pair labels with potentially higher confidence levels. Adding this feature to a project can reduce the need to manually label pairs by leveraging the expertise provided on the Clusters page. Tamr Core does not override any expert feedback already provided on the Pairs page. This feature can create new pairs in addition to the pairs generated from the blocking model.

Enabling Learned Pairs

Before you begin:

- Define your blocking model.

- Ensure you have run the Update Unified Dataset job at least once in your project. You cannot enable this feature until after you run Update Unified Dataset.

To enable learned pairs:

- Navigate to the home page.

- Move your cursor over the top right corner of the project tile and then select Edit.

- Under the setting What is the maximum amount of Learned Pairs that should be generated per cluster, select a maximum number of pair labels (greater than 0) Tamr Core can learn from your verifications within a single cluster.

Note: Tamr suggests setting this field to 10. See the recommended setting.

- Select Save.

- On the Pairs page, select Manage pair generation > Generate pairs, to begin training the model with learned pairs.

Recommended Setting for Maximum Learned Pairs

To avoid biasing training data, the value you supply limits the number of records within a given cluster that Tamr Core can provide labels for based on expert feedback. Tamr recommends setting this field to 10.

The maximum number of learned pairs determines the weight each cluster has on Tamr Core’s machine learning model. Setting this field too low might not provide the model with enough training data, while setting the field too high might cause larger clusters to bias the model.
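The effect of the cap can be seen with some simple arithmetic. A cluster of n records contains n(n-1)/2 possible pairs, so without a cap a 100-record cluster could contribute 4,950 pairs while a 3-record cluster contributes 3. The sketch below is illustrative only: it assumes pairs are sampled at random within each cluster, which this page does not confirm, and the function name and data are hypothetical.

```python
import random
from itertools import combinations


def learned_pairs(clusters, max_pairs_per_cluster, seed=0):
    """Pick at most `max_pairs_per_cluster` record pairs from each cluster."""
    rng = random.Random(seed)  # fixed seed keeps the sketch reproducible
    selected = {}
    for cluster_id, records in clusters.items():
        candidates = list(combinations(sorted(records), 2))
        if len(candidates) > max_pairs_per_cluster:
            # Random sampling is an assumption, not documented behavior.
            candidates = rng.sample(candidates, max_pairs_per_cluster)
        selected[cluster_id] = candidates
    return selected


clusters = {
    "small": {f"s{i}" for i in range(3)},
    "large": {f"l{i}" for i in range(100)},
}

# Possible pairs per cluster with no cap: n * (n - 1) / 2.
uncapped = {cid: len(recs) * (len(recs) - 1) // 2 for cid, recs in clusters.items()}

# Pairs per cluster with the recommended cap of 10.
capped = {cid: len(p) for cid, p in learned_pairs(clusters, 10).items()}
```

Here `uncapped` is 3 pairs for the small cluster and 4,950 for the large one, while `capped` is 3 and 10. With the cap in place, the large cluster no longer outweighs the small one by three orders of magnitude. A cap of 0 yields no pairs at all, which matches the feature being disabled.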

In projects where you set the What is the maximum amount of Learned Pairs that should be generated per cluster field, Tamr recommends that curators audit learned pairs on the Pairs page. To do this, filter to Inferred and Learned Pairs.

The default value for this field is 0, which results in leaving this feature disabled for your project.

Curators and verifiers can review, filter, assign, and verify clusters.
