Maintaining Availability in AWS Cloud Native Deployments

Component Deployment to Maintain Availability

Other than the core EC2 instance, the components in a Tamr AWS cloud native deployment are deployed in multiples to increase availability, performance, or both.

These components can be deployed across up to 4 subnets, as follows:

- Application subnet (1): hosts the EC2 instance where the Tamr application is deployed (also known as the Tamr VM).

- Compute subnet (1): hosts the Amazon EMR clusters and is launched in the same AZ as the Application subnet.

- Data subnets (2): used for deploying a Multi-AZ PostgreSQL Relational Database Service (RDS) instance and a Multi-AZ Amazon Elasticsearch Service (ES) domain.

Tamr recommends against deploying EC2 and EMR resources across multiple regions or availability zones due to connection latency between locations.
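The subnet layout above can be expressed as a small consistency check. The following is a minimal sketch, not part of any Tamr tooling: the `Subnet` class, the `check_layout` function, and the role labels are hypothetical names introduced for illustration. It also assumes that the two data subnets sit in different availability zones, which is what a Multi-AZ RDS subnet group needs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Subnet:
    name: str  # hypothetical label, e.g. "app"
    role: str  # "application", "compute", or "data"
    az: str    # availability zone, e.g. "us-east-1a"


def check_layout(subnets: list[Subnet]) -> list[str]:
    """Return a list of problems; an empty list means the layout matches the text."""
    problems = []
    app = [s for s in subnets if s.role == "application"]
    compute = [s for s in subnets if s.role == "compute"]
    data = [s for s in subnets if s.role == "data"]

    if len(app) != 1:
        problems.append("expected exactly 1 application subnet")
    if len(compute) != 1:
        problems.append("expected exactly 1 compute subnet")
    # EC2 and EMR should share an AZ to avoid cross-AZ connection latency.
    if len(app) == 1 and len(compute) == 1 and app[0].az != compute[0].az:
        problems.append("compute subnet must share the application subnet's AZ")
    if len(data) != 2:
        problems.append("expected exactly 2 data subnets")
    elif data[0].az == data[1].az:
        # Assumption: Multi-AZ RDS and ES need subnets in distinct AZs.
        problems.append("data subnets should be in different AZs for Multi-AZ")
    return problems


if __name__ == "__main__":
    layout = [
        Subnet("app", "application", "us-east-1a"),
        Subnet("emr", "compute", "us-east-1a"),
        Subnet("data-1", "data", "us-east-1a"),
        Subnet("data-2", "data", "us-east-1b"),
    ]
    print(check_layout(layout) or "layout OK")
```

A check like this could run against planned subnet assignments before provisioning; the zone names shown are placeholders.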

For more information on best practices to maintain availability in the event of a disaster, see the Disaster Recovery of Workloads on AWS: Recovery in the Cloud whitepaper.

Service Limit Considerations

The ability to apply these mitigation strategies depends on AWS service limits permitting the required resources to be provisioned. See the Tamr Deploying Tamr on AWS > Limits on AWS Services documentation for more details on AWS service limits.

Recovering from Availability Zone or Region Failure

Important: Configure and test the recovery steps in this section soon after the initial deployment is completed and operational.

Depending on the disaster event, a restore may be required to return the deployment to a known state, as described in the table below.

| Disaster Event | Cloud Native Component Migration Strategy |
| --- | --- |
| Availability zone goes down | Recover to a deployment in an alternate availability zone. Full restore may not be required. |
| Region goes down | Recover to a deployment in an alternate region. Full restore required. |

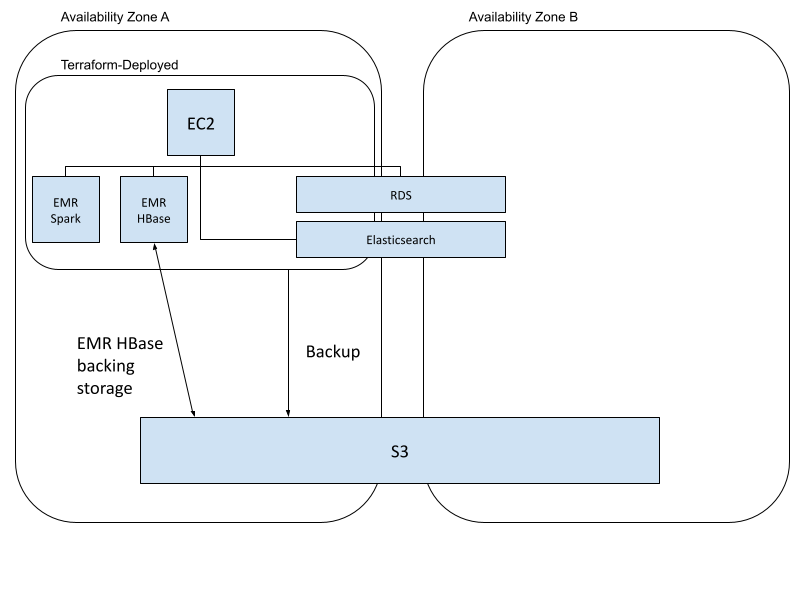

Recovery across Availability Zones in the Same Region

Initial deployment within an availability zone:

- Terraform is used to provision the Tamr and AWS components. In this example, RDS and Elasticsearch are deployed across multiple availability zones. (See the following Amazon documentation: High availability (Multi-AZ) for Amazon RDS and Amazon EMR now supports Multiple Master nodes to enable High Availability for HBase clusters).

- Tamr is backed up to an S3 bucket.

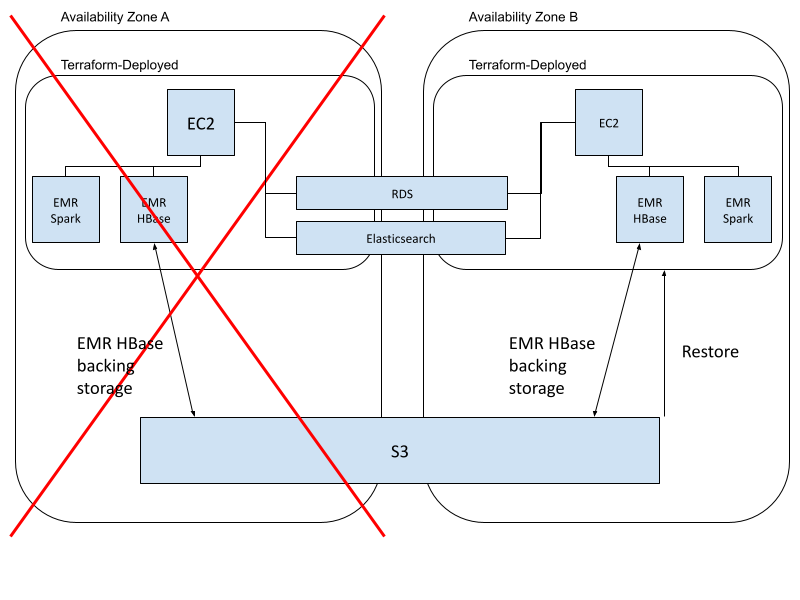

In the event of an availability zone failure:

- Use Terraform to provision a new instance of Tamr and AWS components in a new availability zone in the same region. (See the Tamr Deploying Tamr on AWS > Installation Process documentation.)

  RDS and Elasticsearch are already present in the new availability zone; distribute these components across an additional availability zone in the region.

- Verify the new deployment is working. (See the Tamr Deploying Tamr on AWS > Verify Deployment documentation.)

- Because Tamr’s storage is backed by an S3 bucket available to the whole region, the existing S3 bucket for the region is still in place and can be used. (See the AWS S3 Storage Class documentation.) Perform a restore from a working backup of the original Tamr deployment. (See the Tamr AWS Backup and Restore documentation.)

Users now connect to the new Tamr instance.

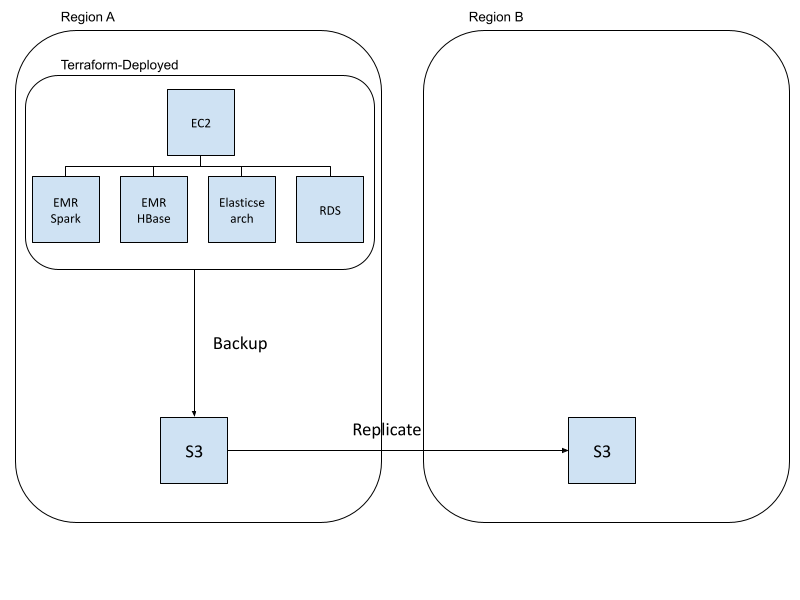

Recovery across Regions

Initial deployment within a region:

- Terraform is used to provision the Tamr and AWS components.

- Tamr is backed up to an S3 bucket.

- The S3 bucket is replicated to another region. (See the AWS Replicating Objects documentation.)
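As an illustration of the replication step, the sketch below builds a replication rule in the shape that the S3 `PutBucketReplication` API accepts (for example, as the `ReplicationConfiguration` argument of boto3's `put_bucket_replication`). The function name, rule ID, role ARN, and bucket name are hypothetical placeholders, not values from a Tamr deployment. S3 replication also requires versioning to be enabled on both the source and destination buckets.

```python
import json


def replication_config(role_arn: str, destination_bucket: str) -> dict:
    """Build a ReplicationConfiguration that replicates every object in a bucket."""
    return {
        "Role": role_arn,  # IAM role that S3 assumes to replicate objects
        "Rules": [
            {
                "ID": "replicate-tamr-backups",  # hypothetical rule name
                "Priority": 1,
                "Status": "Enabled",
                "Filter": {"Prefix": ""},  # empty prefix matches all objects
                "DeleteMarkerReplication": {"Status": "Disabled"},
                "Destination": {"Bucket": f"arn:aws:s3:::{destination_bucket}"},
            }
        ],
    }


if __name__ == "__main__":
    # Placeholder account ID, role, and bucket name for illustration only.
    config = replication_config(
        "arn:aws:iam::123456789012:role/example-replication-role",
        "example-tamr-backups-dr",
    )
    print(json.dumps(config, indent=2))
```

The destination bucket would live in the recovery region, so that the backup is still reachable if the primary region fails.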

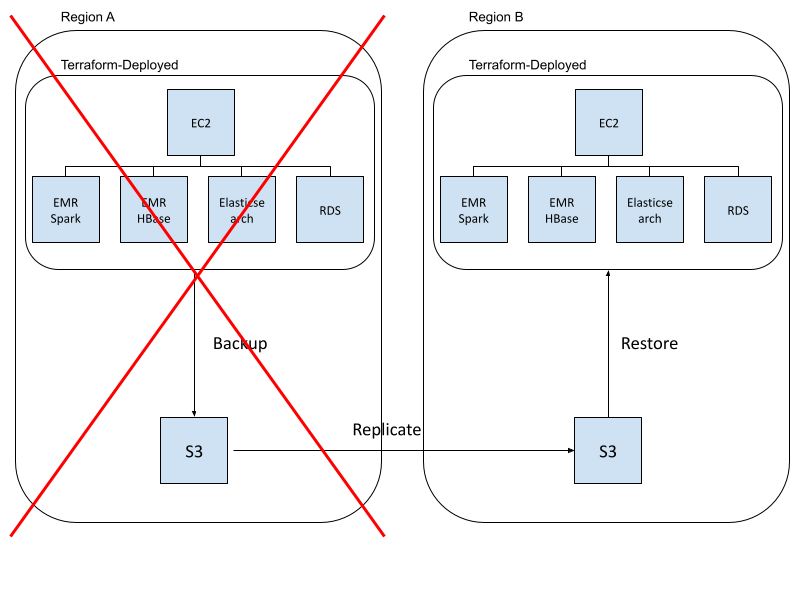

In the event of a region failure:

- Use Terraform to provision a new instance of Tamr and AWS components in a new region. (See the Tamr Deploying Tamr on AWS > Installation Process documentation.)

- Verify the new deployment is working. (See the Tamr Deploying Tamr on AWS > Verify Deployment documentation.)

- Restore Tamr to the new instance using the backup from the replicated S3 bucket. (See the Tamr AWS Backup and Restore > Restore Cloud-Native Deployment documentation.)

Users now connect to the new Tamr instance.
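The two recovery procedures differ only in where the new deployment is provisioned and which S3 bucket supplies the backup. The sketch below captures that as an ordered runbook. The `recovery_steps` function and the event labels are hypothetical names used for illustration; the steps themselves paraphrase the procedures above and do not invoke Terraform or Tamr.

```python
def recovery_steps(event: str) -> list[str]:
    """Return the ordered recovery steps for a disaster event.

    `event` is either "availability_zone" or "region" (hypothetical labels).
    """
    if event == "availability_zone":
        target = "a new availability zone in the same region"
        backup = "the existing regional S3 bucket"
    elif event == "region":
        target = "a new region"
        backup = "the replicated S3 bucket"
    else:
        raise ValueError(f"unknown disaster event: {event!r}")

    return [
        f"Provision Tamr and AWS components with Terraform in {target}",
        "Verify the new deployment is working",
        f"Restore Tamr from the backup in {backup}",
        "Direct users to the new Tamr instance",
    ]


if __name__ == "__main__":
    for number, step in enumerate(recovery_steps("region"), start=1):
        print(f"{number}. {step}")
```

Keeping the steps in one place makes it easier to rehearse both scenarios soon after the initial deployment.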

Backup and Restore

See the Tamr AWS Backup and Restore documentation for the steps to back up and restore an AWS cloud-native Tamr installation.

Important: Tamr backups are stored in an S3 bucket located within a single region. Replicate S3 buckets used to store backups to an additional region to mitigate data loss in the event of a region failure. (See the AWS Replicating Objects documentation.)

The time to restore varies with the configuration and workload of the Tamr instance; a recovery time objective (RTO) of under 8 hours and a recovery point objective (RPO) of under 24 hours are generally possible.
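To make the two objectives concrete, the sketch below checks a recovery drill against them. It assumes the usual definitions: the recovery point is measured from the last usable backup to the failure, and the recovery time from the failure to the moment service is restored. The function name and the sample timestamps are hypothetical.

```python
from datetime import datetime, timedelta

RTO_TARGET = timedelta(hours=8)   # maximum acceptable downtime
RPO_TARGET = timedelta(hours=24)  # maximum acceptable window of lost data


def meets_objectives(
    last_backup: datetime, failure: datetime, service_restored: datetime
) -> dict:
    """Compare one recovery event against the RTO and RPO targets."""
    recovery_point = failure - last_backup      # data written in this window is lost
    recovery_time = service_restored - failure  # how long users were without service
    return {
        "recovery_point": recovery_point,
        "recovery_time": recovery_time,
        "rpo_met": recovery_point < RPO_TARGET,
        "rto_met": recovery_time < RTO_TARGET,
    }


if __name__ == "__main__":
    # Sample drill: backup at 02:00, failure at 20:00, service back at 01:30 next day.
    result = meets_objectives(
        last_backup=datetime(2024, 1, 1, 2, 0),
        failure=datetime(2024, 1, 1, 20, 0),
        service_restored=datetime(2024, 1, 2, 1, 30),
    )
    print(result)
```

In this sample the recovery point is 18 hours and the recovery time is 5.5 hours, so both targets are met. Backing up at least daily is what keeps the recovery point under 24 hours.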
